Whether it’s a scientific, industrial, or embedded camera, designing an imaging system goes far beyond choosing a sensor. A camera is a complex system that must capture light, convert it into electrical signals, process the resulting data, and then transmit it to the host system that analyzes, stores, or displays it.

This is commonly referred to as the image acquisition chain, which encompasses all the steps required to transform light from a scene into a usable image or video.

This chain can be described through four main functions: capturing and illuminating the scene, managing and controlling the system, processing the information, and finally transmitting the data.

Designing a camera involves making these different functions work together while meeting the constraints imposed by the final application.

CAPTURING LIGHT: SENSOR AND ILLUMINATION

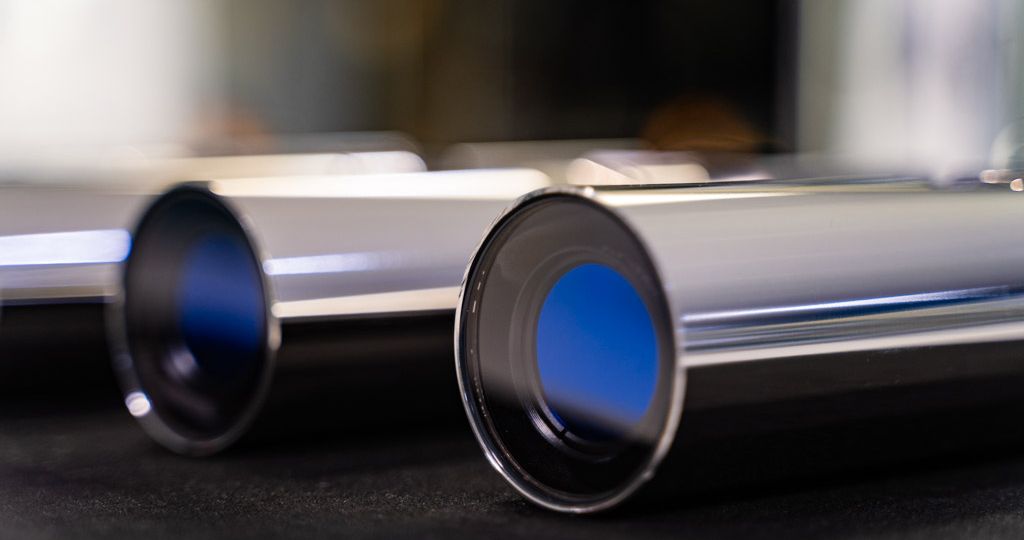

The first step in the acquisition chain is capturing light from the observed scene. This function relies on two key elements: the image sensor and the lighting.

When designing a camera, the choice of sensor is primarily driven by the requirements defined in the specifications. These take into account the target application and the expected system performance.

Several key parameters must be defined.

1. Resolution

Resolution determines the level of detail the camera can capture. Depending on the application, the priority may be resolving very fine structures or, conversely, covering a wider area with faster acquisition.

2. Sensor size and technology

Sensor size and technology are also critical factors. CMOS and CCD sensors each offer specific characteristics in terms of sensitivity, noise, power consumption, and readout speed. Pixel size directly affects how much light each pixel collects: all else being equal, larger pixels gather more light and therefore perform better in low-light conditions.

3. Lighting conditions

The lighting conditions in which the camera will operate are also decisive. A camera designed for low-light environments, outdoor use under strong illumination, or underwater applications will require different technological choices.

4. Spectral range

Finally, the spectral range to be observed may vary depending on the application. Some cameras are designed for the visible spectrum only, while others must be sensitive to infrared or ultraviolet wavelengths.

All these parameters directly influence both sensor selection and lighting design. As a result, the sensor, optics, and illumination are typically defined first when establishing the system architecture.

At this stage, electronics are not yet the driving factor—they must adapt to these choices to ensure optimal operation of both the sensor and the lighting.
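To make the resolution and pixel-size criteria above more concrete, here is a minimal sizing sketch in Python. It assumes that the smallest feature of interest must span at least two pixels (a Nyquist-style minimum; many systems keep more margin) and that the light collected per pixel scales with pixel area when fill factor and quantum efficiency are equal. The function names and example values are hypothetical and only illustrate the reasoning.

```python
import math


def required_pixels(field_of_view_mm: float, smallest_feature_mm: float,
                    pixels_per_feature: float = 2.0) -> int:
    """Pixels needed along one axis to resolve the smallest feature.

    Assumes the feature must span at least `pixels_per_feature` pixels
    (2 is a Nyquist-style minimum; practical designs often use more).
    """
    if field_of_view_mm <= 0 or smallest_feature_mm <= 0:
        raise ValueError("sizes must be positive")
    return math.ceil(field_of_view_mm / smallest_feature_mm * pixels_per_feature)


def relative_light_collection(pixel_pitch_um: float, reference_pitch_um: float) -> float:
    """Light collected per pixel relative to a reference pixel pitch.

    Assumes square pixels with identical fill factor and quantum efficiency,
    so the collected signal scales with pixel area (pitch squared).
    """
    return (pixel_pitch_um / reference_pitch_um) ** 2


if __name__ == "__main__":
    # Hypothetical case: 200 mm field of view, 0.5 mm smallest feature.
    print(required_pixels(200.0, 0.5))            # 800 pixels along this axis
    print(relative_light_collection(6.0, 3.0))    # 4.0x the light of a 3 um pixel
```

Under these assumptions, doubling the pixel pitch quadruples the light per pixel, but for a fixed sensor size it also halves the number of pixels along each axis, which is exactly the resolution versus sensitivity trade-off described above.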

MANAGING AND CONTROLLING: THE CENTRAL ROLE OF ELECTRONICS

Once the sensor and lighting are defined, electronics play a central role in the system’s operation.

First, they must provide the sensor with the electrical conditions it needs to operate correctly. This typically includes generating several supply rails with tight voltage and noise requirements (for example, separate analog, digital, and I/O supplies), as well as generating the clock and control signals that drive exposure and pixel readout.

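Electronics are also responsible for handling the sensor’s output signal. Depending on the sensor architecture, this signal may be analog (as is typical of CCDs, which need an external analog front end and analog-to-digital converter) or digital (as with most CMOS sensors, which integrate on-chip converters). In either case, it must be properly conditioned to preserve image quality.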

Another key function is synchronizing the various system components. Image capture must be coordinated with lighting, especially in applications where illumination is pulsed or synchronized with the sensor’s exposure time.
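The synchronization requirement can be expressed as a simple timing check. The sketch below assumes a global-shutter sensor, where all rows share a single exposure window (on a rolling-shutter sensor, rows start exposing at staggered times and the check would have to be done per row). It computes what fraction of a light pulse falls inside the exposure window; the `Window` type and the microsecond timings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Window:
    start_us: float     # start time relative to the frame trigger, in microseconds
    duration_us: float  # length of the window, in microseconds

    @property
    def end_us(self) -> float:
        return self.start_us + self.duration_us


def overlap_us(a: Window, b: Window) -> float:
    """Length of the time interval shared by two windows (0 if they are disjoint)."""
    return max(0.0, min(a.end_us, b.end_us) - max(a.start_us, b.start_us))


def pulse_fraction_captured(exposure: Window, pulse: Window) -> float:
    """Fraction of the light pulse that falls inside the exposure window."""
    if pulse.duration_us <= 0:
        raise ValueError("pulse duration must be positive")
    return overlap_us(exposure, pulse) / pulse.duration_us


if __name__ == "__main__":
    exposure = Window(start_us=0.0, duration_us=1000.0)
    print(pulse_fraction_captured(exposure, Window(100.0, 500.0)))  # 1.0: fully inside
    print(pulse_fraction_captured(exposure, Window(800.0, 400.0)))  # 0.5: half is wasted
```

A value below 1.0 means part of the pulse is emitted while the sensor is not integrating, which wastes light and, with pulsed illumination, can make brightness vary from frame to frame if the timing drifts.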

Lighting itself also requires dedicated control. Electronics must regulate light intensity, manage lighting sequences, and sometimes monitor temperature to ensure stability and maximize the lifespan of light sources.
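As an illustration of combining intensity regulation with temperature monitoring, the following sketch derates an LED drive current as the measured temperature rises. The linear derating curve, the thresholds, and the maximum current are placeholder assumptions rather than datasheet values, and the sketch also assumes that light output is roughly proportional to drive current.

```python
def led_drive_current_ma(intensity: float, temperature_c: float,
                         max_current_ma: float = 1000.0,
                         derate_start_c: float = 60.0,
                         shutdown_c: float = 85.0) -> float:
    """Drive current for a requested intensity (0..1), derated with temperature.

    Below derate_start_c the full current is allowed; between derate_start_c
    and shutdown_c the allowed maximum falls linearly to zero; at or above
    shutdown_c the LED is switched off. All thresholds are illustrative.
    """
    intensity = min(max(intensity, 0.0), 1.0)  # clamp the request to [0, 1]
    if temperature_c >= shutdown_c:
        limit = 0.0
    elif temperature_c <= derate_start_c:
        limit = 1.0
    else:
        limit = (shutdown_c - temperature_c) / (shutdown_c - derate_start_c)
    return intensity * limit * max_current_ma


if __name__ == "__main__":
    print(led_drive_current_ma(0.5, 25.0))  # 500.0 mA: cool, half intensity
    print(led_drive_current_ma(1.0, 72.5))  # 500.0 mA: full request, derated by half
    print(led_drive_current_ma(1.0, 90.0))  # 0.0 mA: over-temperature shutdown
```

In a real design this function would sit inside a control loop that reads a temperature sensor near the light source; derating trades some brightness for stability and a longer source lifespan.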

In this sense, electronics act as the true nervous system of the camera, coordinating all components.

IMAGE PROCESSING: TURNING SIGNALS INTO USABLE INFORMATION

The data produced by the sensor must then be converted into usable images. This corresponds to the embedded image processing stage.

Processing occurs at multiple levels. It may involve essential operations required for the camera to function, such as reconstructing a color image from raw sensor data (demosaicing), correcting defective pixels, or reducing noise.
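Defective pixel correction is one of the simplest of these essential operations. The sketch below assumes a monochrome image stored as nested lists and a defect map of known bad pixel coordinates (for example, obtained during calibration); each defective pixel is replaced by the median of its non-defective neighbors. The function name and data layout are hypothetical, and an embedded implementation would typically run this on an FPGA or processor rather than in Python.

```python
from statistics import median


def correct_defective_pixels(image, defects):
    """Replace each known defective pixel with the median of its valid neighbors.

    image:   2-D list of pixel values (rows of equal length); not modified.
    defects: set of (row, col) coordinates from a defect map.
    Returns a corrected copy. Assumes a monochrome image; on a raw Bayer
    mosaic, neighbors should be taken from sites of the same color instead.
    """
    rows, cols = len(image), len(image[0])
    out = [row[:] for row in image]
    for r, c in defects:
        # Collect the 3x3 neighborhood, clipped at the image borders,
        # excluding the pixel itself and any other known defect.
        neighbors = [
            image[rr][cc]
            for rr in range(max(r - 1, 0), min(r + 2, rows))
            for cc in range(max(c - 1, 0), min(c + 2, cols))
            if (rr, cc) != (r, c) and (rr, cc) not in defects
        ]
        if neighbors:  # leave the pixel as-is if no valid neighbor exists
            out[r][c] = median(neighbors)
    return out


if __name__ == "__main__":
    frame = [[10, 12, 11],
             [13, 255, 12],   # stuck-high pixel at (1, 1)
             [11, 12, 10]]
    print(correct_defective_pixels(frame, {(1, 1)})[1][1])  # 11.5
```

A median is used rather than a mean so that a single outlier among the neighbors does not pull the replacement value far from the local level.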

In other cases, more advanced processing can be integrated directly into the camera to prepare data for its final use.

This stage often sits at the intersection of multiple constraints. Processing must adapt to sensor characteristics, lighting conditions, and output interface requirements. Available computing power, acceptable latency, and output bandwidth also influence architectural choices.

Depending on the application, these processes may be handled by embedded processors, FPGAs, or dedicated hardware.

DATA OUTPUT: TRANSMITTING THE IMAGE

The final step in the acquisition chain is transmitting images or video streams to the system that will use them.

This transmission can take different forms depending on the application. In some cases, it involves real-time video for an operator or visualization system. In others, images are sent to analysis or storage systems.

The choice of communication interface depends on several factors: required bandwidth, transmission distance, integration constraints, and link robustness.
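Bandwidth is usually the first of these factors to check, and a rough estimate is simple arithmetic: the uncompressed payload rate is width × height × bits per pixel × frame rate. The sketch below compares that rate with a link capacity while reserving a margin for protocol overhead; the 80% usable fraction is an assumed placeholder, since real overhead depends on the interface and its implementation.

```python
def raw_data_rate_bps(width: int, height: int, bits_per_pixel: int, fps: float) -> float:
    """Uncompressed video payload rate in bits per second (no protocol overhead)."""
    return width * height * bits_per_pixel * fps


def fits_link(width: int, height: int, bits_per_pixel: int, fps: float,
              link_capacity_bps: float, usable_fraction: float = 0.8) -> bool:
    """Check whether the raw stream fits a link, keeping a margin for overhead.

    usable_fraction is an assumed placeholder, not a property of any interface.
    """
    rate = raw_data_rate_bps(width, height, bits_per_pixel, fps)
    return rate <= usable_fraction * link_capacity_bps


if __name__ == "__main__":
    # 1920 x 1080 pixels, 12-bit raw, 60 frames per second.
    print(raw_data_rate_bps(1920, 1080, 12, 60))       # 1492992000 bits/s (~1.49 Gbit/s)
    print(fits_link(1920, 1080, 12, 60, 1e9))          # False on a nominal 1 Gbit/s link
    print(fits_link(1920, 1080, 12, 60, 5e9))          # True on a hypothetical 5 Gbit/s link
```

When the estimate exceeds the available bandwidth, the options are to reduce resolution, bit depth, or frame rate, to compress or pre-process the data inside the camera, or to choose a faster interface, which is why this decision feeds back into the processing architecture.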

This stage must be considered early in the design process, as it directly impacts both the electronic architecture and the camera’s processing capabilities.

DESIGN DRIVEN BY THE APPLICATION

Designing a camera is therefore the result of balancing multiple disciplines: optics, electronics, signal processing, and system architecture.

The specifications serve as the starting point for this process. They define the expected performance and operational constraints. Based on these requirements, each element of the acquisition chain must be designed consistently to ensure system quality and reliability.

Mechanical design also plays a critical role. It goes beyond simply protecting components: it ensures proper integration and alignment of optical and electronic elements, manages thermal dissipation, guarantees system robustness, and adapts the camera to its operating environment. Depending on the application, it may also include additional features such as mounting systems, environmental protection, or interfacing with other equipment.

Ultimately, camera design relies on a holistic system approach, where each technical domain must be considered in relation to the others. This overall coherence is what enables the development of high-performance imaging solutions tailored to demanding applications.